The technical goal of any calibration-related effort is to achieve a meaningful and valid Chemical Measurement Process (CMP). According to the International Union of Pure and Applied Chemistry (IUPAC):

Chemical Measurement Process (CMP): An analytical method of defined structure that has been brought into a state of statistical control, such that its imprecision and bias are fixed, given the measurement conditions. This is prerequisite for the evaluation of the performance characteristics of the method, or the development of meaningful uncertainty statements concerning analytical results.

Per IUPAC:

Calibration Function: The functional (not statistical) relationship for the chemical measurement process, relating the expected value of the observed (gross) signal or response variable to the analyte amount. The corresponding graphical display for a single analyte is referred to as the calibration curve. When extended to additional variables or analytes which occur in multicomponent analysis, the ‘curve’ becomes a calibration surface or hypersurface.

Say What???

Every analytical measurement device has to be taught how to do its job. When it is born, it is born deaf and dumb. It knows nothing. It has to be taught right from wrong. This teaching of right from wrong is part of what we refer to in the courtroom as simply “calibration.”

Before we get to what calibration is and how it is best performed, we have to get to our old friend chromatography. Chromatography is the science of separating different things in analytical chemistry. If we do not have a “good” qualitative result, then we cannot possibly have a meaningful quantitative result. We seek the holy grail: Specificity!

For example, in courtrooms all across the United States, perhaps a hundred times per day or more, Gas Chromatography with Flame Ionization Detection (GC-FID) results are reported as specific results. There is no magic number of compounds that a laboratory has to include in its volatile mix to prove specificity. The most that the laboratory can say in the case of blood ethanol analysis, if done correctly, is that ethanol (EtOH) has been separated from whatever else is in that mix. If the laboratory wants to put only two compounds in its separation mix, then that is its choice. But when it puts only two compounds in its separation matrix, it cannot come into court and legitimately say that it has identified ethanol exclusively (specifically). In that instance, the most that the laboratory can say is that the EtOH is not the other compound, as proven by the separation.

In GC-FID, it is all about what level of risk of being wrong the laboratory is willing to assume in the design of its chromatographic conditions and in what goes into its separation matrix. The more compounds in the separation matrix that show true separation from the target analyte, the more the laboratory has proved separation from those compounds. If it isn’t in your mix, you haven’t proven separation. A laboratory with scientific integrity will admit that it never gets to true proof of specificity using GC-FID. It is beyond the capabilities of the technology.

So again, without specificity, we can have no confidence in any quantitative result that follows.

Calibration versus calibration check

There is a large difference between the scientific terms “calibration” and “calibration check.” The act of calibration, in essence, is “wiping the slate clean” of everything the machine was previously “taught” about quantitation (how much), and then “re-teaching” it, through a series of actions, a quantitation that can later be used to infer the amount present in subsequent tests of unknowns (e.g., a motorist’s BrAC).

For any measurement to be valid and to inspire confidence, the “teaching” of quantitation to the machine through the act of calibration must be based upon the use of Certified Reference Materials (CRMs) as defined by the International Organization for Standardization (ISO) Guides.

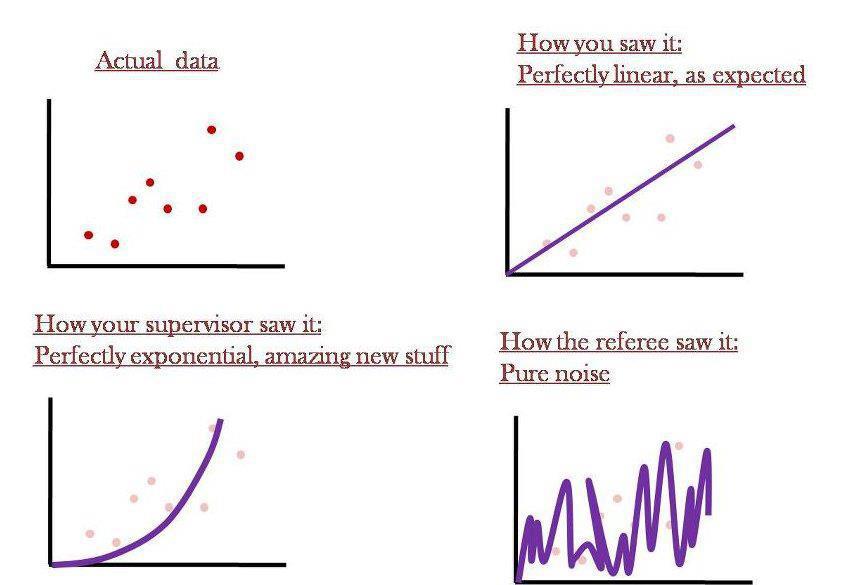

Further, calibration is best performed with a 5×5 method covering a suitable linear dynamic range, spanning the results that machine operators might expect from the unknowns. The 5×5 method refers to five different concentrations, each tested five different times, resulting in 25 distinct measurements. Zero cannot be a data point in the act of calibration as we are incapable of measuring the total and complete absence of every atom, the experts stated. The calibration of a machine requires metrologically meaningful and robust statistical analysis of the results to determine the linearity of the detector.

By using this 5×5 method across a suitable linear dynamic range, with statistical evaluation of the results, as a minimum means of calibration, we can meaningfully evaluate both precision (the degree of random error) and bias (systematic error, or lack of trueness). It is in this manner that one becomes capable of fairly and validly evaluating unknowns.
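As a rough illustration of the kind of statistical evaluation a 5×5 calibration permits, the following Python sketch fits an ordinary least-squares line to the 25 points and then back-calculates each replicate to estimate bias and imprecision at every level. The function names, the choice of an unweighted straight-line model, and all of the numbers are assumptions made for illustration only; they are not taken from any particular laboratory's procedure or from a real instrument.

```python
from statistics import mean, stdev


def fit_line(x, y):
    """Ordinary least-squares fit of response = slope * concentration + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def evaluate_calibration(data):
    """data maps each reference concentration to its list of replicate responses."""
    if any(conc <= 0 for conc in data):
        raise ValueError("zero (or negative) concentrations are not calibration points")
    x = [conc for conc, reps in data.items() for _ in reps]
    y = [resp for reps in data.values() for resp in reps]
    slope, intercept = fit_line(x, y)
    report = {}
    for conc, reps in data.items():
        # Back-calculate each replicate through the fitted line.
        back = [(resp - intercept) / slope for resp in reps]
        report[conc] = {
            "bias_pct": 100 * (mean(back) - conc) / conc,  # lack of trueness
            "cv_pct": 100 * stdev(back) / mean(back),      # imprecision
        }
    return slope, intercept, report


# Invented 5x5 data: five concentrations, five replicate detector responses each.
example = {
    0.02: [2050, 1980, 2010, 2030, 1990],
    0.05: [5010, 4950, 5060, 5000, 4980],
    0.10: [10020, 9960, 10050, 9990, 10010],
    0.20: [20100, 19900, 20050, 19950, 20000],
    0.40: [39900, 40100, 40050, 39950, 40000],
}

slope, intercept, report = evaluate_calibration(example)
for conc, stats in report.items():
    print(f"{conc:.2f}: bias {stats['bias_pct']:+.2f}%  CV {stats['cv_pct']:.2f}%")
```

A real evaluation would go further (residual plots, tests of linearity, possibly a weighted model), but even this small sketch shows why 25 points say far more about a detector than a single reading can.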

This is to be distinguished from a “calibration check.” A calibration check is a “quick and dirty” method of checking on the calibration of the device. The key distinguishing feature is that with a calibration check, even if there is fully verified bias or lack of precision, the device is never adjusted or re-calibrated so long as the results of the calibration check meet some sort of arbitrary “acceptance criteria.” Even if bias is found by the calibration checks, the results of the unknowns tested later are never corrected or adjusted to account for this known error.
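The point about acceptance criteria can be made concrete with a small Python sketch. The function name `calibration_check`, the ±5% tolerance, and the readings are all hypothetical, chosen only to show how a check can “pass” while a measurable bias remains in place.

```python
from statistics import mean


def calibration_check(readings, reference, tolerance_pct):
    """Return (passed, bias_pct) for replicate readings of one reference standard."""
    bias_pct = 100 * (mean(readings) - reference) / reference
    return abs(bias_pct) <= tolerance_pct, bias_pct


# Invented numbers: a 0.100 reference read three times, judged against +/-5 %.
passed, bias_pct = calibration_check(
    [0.103, 0.104, 0.105], reference=0.100, tolerance_pct=5.0
)
print(f"passed={passed}, bias={bias_pct:+.1f}%")
```

In this invented example the check passes even though the mean reading is about 4% high. Nothing in the check itself adjusts the device or corrects later results for that bias.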

This 5×5 method is the minimum scientific method for calibration.

Other models

In fact, the Scientific Working Group for Forensic Toxicology (SWGTOX) Doc 003 Revision DRAFT Out for Public Comment – June 18, 2012 reads:

The calibrator samples shall span the range of concentrations expected. At least six different non-zero concentration levels shall be used to establish the calibration model. The concentration levels shall be appropriately spaced across the calibration range. A minimum of five replicates per concentration level is required and the combined data are used to establish the calibration model. The replicates to establish the calibration model shall be in separate runs. The origin shall not be included as a calibration point. Once the calibration model is established, it shall not be arbitrarily changed to achieve acceptable results during a given analytical run. For example, one shall not switch from an unweighted linear model to a weighted linear model in order to adjust for changes in instrument performance. Although it has become widespread practice, it is emphasized that a calibration model cannot be evaluated simply via its coefficient of correlation…. Once the calibration model has been established, fewer calibration samples (i.e., fewer levels or single/fewer replicates) may be used for routine analysis. However, if fewer calibration samples are chosen, they must 1) include the lowest and highest calibration levels used to establish the model, 2) include no fewer than four non-zero calibration points, and 3) must be used for the accuracy and precision studies of the validation. http://www.swgtox.org/documents/Validation_public_comment.pdf (last accessed May 8, 2013).
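The countable parts of the draft language quoted above can be sketched as a simple design check in Python. The function names and the way a design is represented (a dictionary of concentration to replicate responses) are assumptions for illustration. The sketch does not check the requirements that replicates come from separate runs or that levels be appropriately spaced.

```python
def check_initial_design(data, min_levels=6, min_replicates=5):
    """data maps concentration -> replicate responses; returns a list of problems."""
    problems = []
    if any(conc == 0 for conc in data):
        problems.append("origin included as a calibration point")
    nonzero = [conc for conc in data if conc != 0]
    if len(nonzero) < min_levels:
        problems.append(f"only {len(nonzero)} non-zero levels (need {min_levels})")
    for conc in nonzero:
        if len(data[conc]) < min_replicates:
            problems.append(
                f"level {conc}: only {len(data[conc])} replicates (need {min_replicates})"
            )
    return problems


def check_routine_levels(routine, established, min_points=4):
    """Check a reduced routine calibration set against the established levels."""
    problems = []
    if min(established) not in routine:
        problems.append("lowest established level missing")
    if max(established) not in routine:
        problems.append("highest established level missing")
    nonzero = [conc for conc in routine if conc != 0]
    if len(nonzero) < min_points:
        problems.append(f"only {len(nonzero)} non-zero points (need {min_points})")
    return problems


# Placeholder responses: only the counts of levels and replicates matter here.
five_by_five = {conc: [0.0] * 5 for conc in (0.02, 0.05, 0.10, 0.20, 0.40)}
print(check_initial_design(five_by_five))
print(check_routine_levels([0.02, 0.10, 0.20, 0.40],
                           [0.02, 0.05, 0.08, 0.10, 0.20, 0.40]))
```

Run against a 5×5 design, the first check reports too few levels, because the draft asks for at least six non-zero concentrations rather than five.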

IUPAC advises that, for method validation purposes, six or more calibration standards should be run, preferably at least in triplicate, in a randomized way. “Harmonized Guidelines for Single-Laboratory Validation of Methods of Analysis, IUPAC Technical Report 2002” (prepared by M. Thompson, S.L.R. Ellison and R. Wood), Pure Appl. Chem. 74 (2002) 835. ISO 8466 states that, for an initial assessment of calibration performance, the laboratory should employ at least five calibration standards, although it recommends 10, along with 10 replicates of the lowest and highest standards. ISO 8466, Water Quality - Calibration and Evaluation of Analytical Methods and Estimation of Performance Characteristics (Parts 1 and 2), International Organization for Standardization (ISO), Geneva, 1990 and 1993.